- Some recurring conversations at the Curve about economic effects of AI.


The Curve was an amazing conference (thank you to Rachel Weinberg, Golden Gate, & Manifund). Among dozens of conversations & arguments, here are some things that kept coming up:

- We need more forecasts of economic impacts.

- We need more theory of capabilities.

- We need more metrics of capabilities.

- We need more theory of offense-defense balance.

- We need more bottom-up modelling of capabilities growth.

I feel bad saying “we need,” & scolding others for the work they’re not doing, so I’ve tried to add my own very tentative best guesses about each below.

We need more forecasts of economic impacts

- We have few forecasts of the impact of strong AI.


A number of people have published explicit forecasts of the future trend of AI capabilities (“timelines”), but there are far fewer forecasts of the economic effects of those capabilities, i.e. the effects on GDP, consumption, employment, wages, and asset prices.

The most explicit forecast which allows for strong AI is Epoch’s GATE model, see below.

- Having explicit forecasts would be very useful.


What do we expect AI to do to these things?

- Wages & employment, across sectors and tenure.

- The price of land, the value of the capital stock.

- Incomes across different countries.

I feel it’s like early 2020 and COVID: if we’re trying to make a decision about whether to announce a lock-down it should be based on a clear idea about the counterfactual, which includes a lot of equilibrium effects.

- Most academic forecasts assume no capabilities growth.

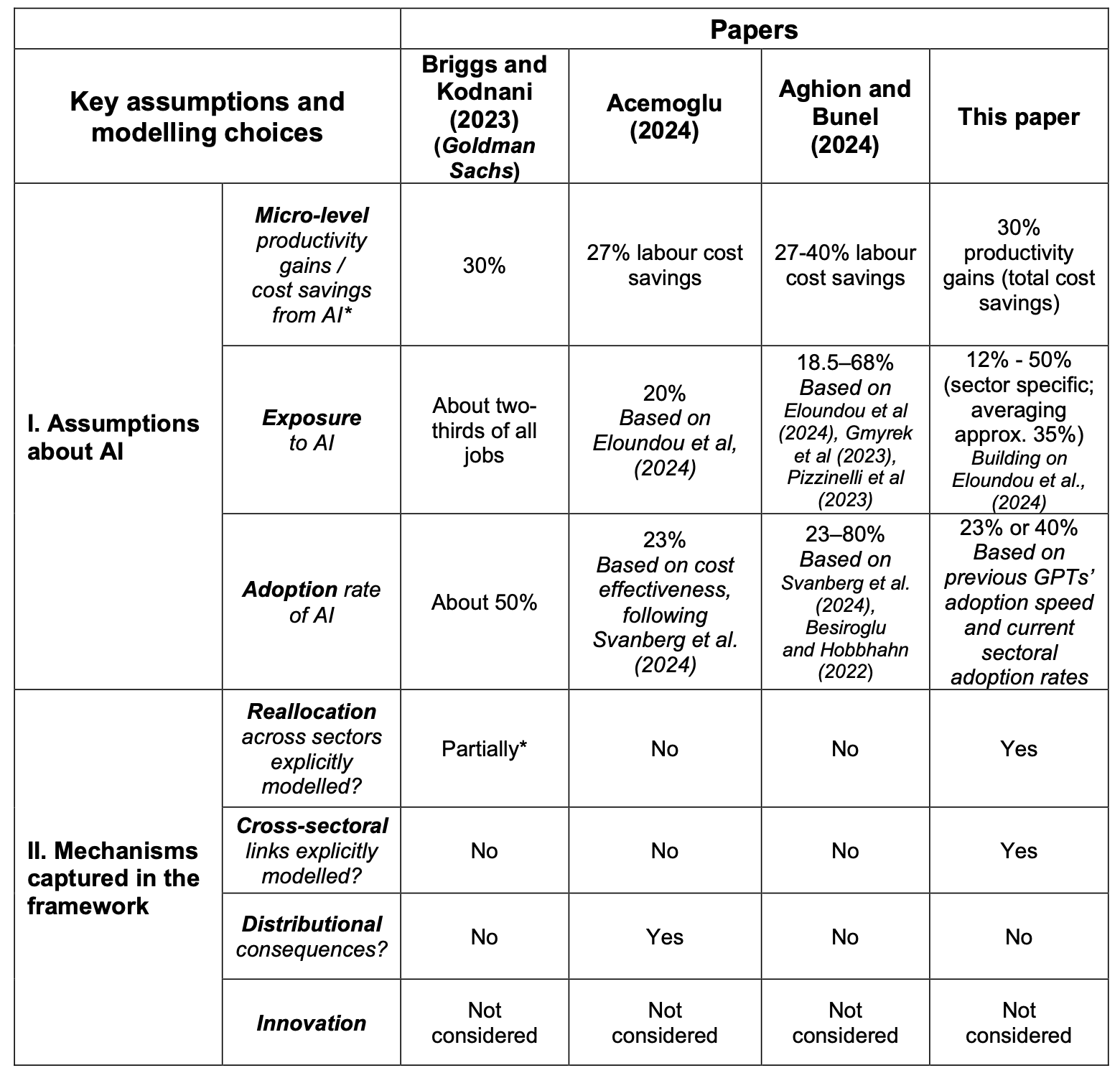

- I complained about this in my previous post: Acemoglu (2024) and Aghion and Bunel (2024) both give explicit forecasts of AI’s economic impact (0.06%/year and 1%/year respectively), but both effectively assume that AI will not get any better: they are just extrapolating out from existing capabilities.

- I know of just two very concrete economics AGI forecasts.


- Korinek and Suh (2024) forecast that, over 15 years, GDP triples; wages increase a little at first, then collapse when everything is automated. GDP continues to increase.

- Epoch’s GATE model (Erdil et al. (2025)) forecasts full automation in 2034, by which point gross world product (GWP) has grown 10X. They forecast that wages will at first increase dramatically then, at some point after full automation is achieved, collapse.1
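To put the forecasts above on a common footing, the sketch below converts each into a constant annual growth rate and a 15-year cumulative multiple. Only the headline numbers come from the text; the 2025 base year for the GATE 10X figure is an assumption made for illustration, and the helper names are hypothetical. The Acemoglu and Aghion-Bunel figures are growth attributed to AI, while the other two are total output growth, so the comparison is rough.

```python
def annualized_rate(multiple: float, years: float) -> float:
    """Constant annual growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1.0 / years) - 1.0


def cumulative_multiple(rate: float, years: float) -> float:
    """Total growth multiple from compounding `rate` for `years`."""
    return (1.0 + rate) ** years


# ASSUMPTION: the GATE "10X by 2034" figure is measured from 2025.
GATE_BASE_YEAR = 2025
GATE_YEARS = 2034 - GATE_BASE_YEAR

# Annual rates implied by each forecast quoted in the text.
annual_rates = {
    "Acemoglu (2024), AI contribution": 0.0006,
    "Aghion and Bunel (2024), AI contribution": 0.01,
    "Korinek and Suh (2024), GDP triples in 15y": annualized_rate(3.0, 15),
    "GATE (Erdil et al. 2025), GWP 10X": annualized_rate(10.0, GATE_YEARS),
}

HORIZON_YEARS = 15
for name, rate in annual_rates.items():
    multiple = cumulative_multiple(rate, HORIZON_YEARS)
    print(f"{name}: {rate:.2%}/year, x{multiple:.2f} over {HORIZON_YEARS} years")
```

Under these assumptions the two AGI forecasts imply about 7.6%/year and about 29%/year, far above the 0.06%/year and 1%/year figures from the forecasts that hold capabilities fixed.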

- Forecasting markets expect big capabilities, small impacts.


Forecasting markets expect rapid progress in AI capabilities. As of Oct 2025, the median Metaculus forecasts are:

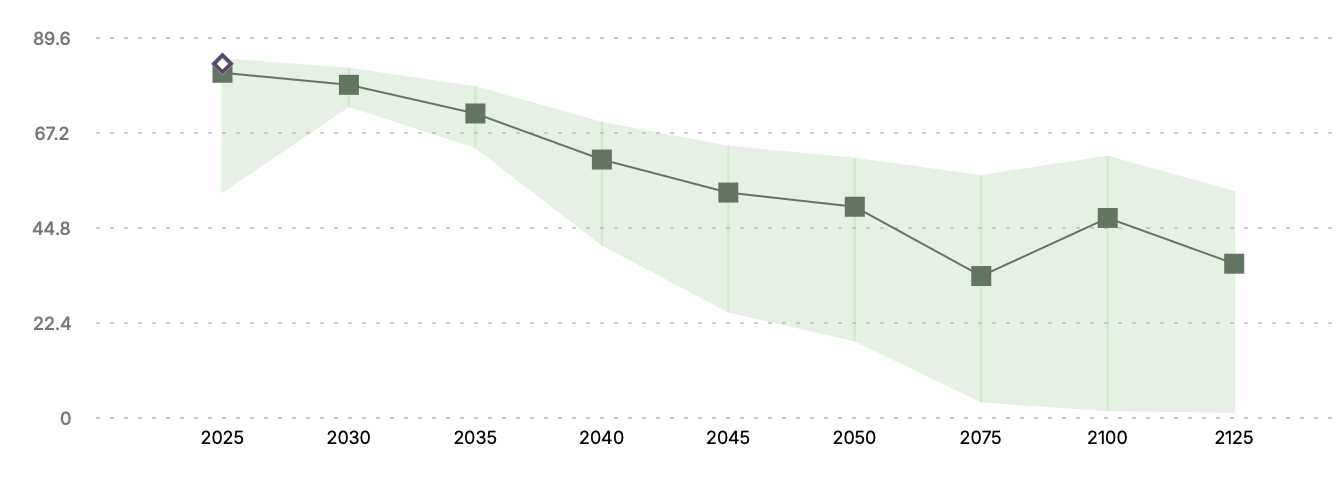

However, forecasting markets also expect economic variables to remain relatively flat. Over the next 100 years Metaculus expects:

- World productivity growth increases from 3% to 5%.

- Labor force participation falls smoothly from 84% to 36%.

Both forecasts are relatively smooth over time. The smoothness could still be consistent with expecting dramatic effects, if it reflects (1) uncertainty about capabilities growth; or (2) disagreement among forecasters.

- The financial markets seem to expect small impacts.


Asset prices do not seem to anticipate dramatic effects from an intelligence explosion, though they are hard to interpret.

Chow, Halperin, and Mazlish (2024) argue that, if markets anticipated transformative AI, we should expect real interest rates to increase (and they haven’t). Nordhaus (2021) says that an AI singularity would predict a variety of things, especially an increasing growth rate and increasing capital share, and he says he does not find much evidence for these.

Am I missing other forecasts?

- How can we get more people to forecast?

- One idea that Anna Yelikazova and I discussed: sponsor a couple of dozen econ grad students to write 5-page very explicit forecasts, and give prizes to the most compelling ones.

We need more theory of capabilities

- We have many projects which are collecting data on AI impacts.


We can organize AI impacts into a waterfall, top to bottom:

- Data on AI capabilities – benchmarks that have representative tasks across the economy - e.g. GDP-val (Patwardhan et al. (2025)), APEX.

- Data on AI uplift – effect on productivity, e.g. Becker et al. (2025).

- Data on AI adoption – adoption by occupations, by industry, by demographic, e.g. Bick et al. (2024).

- Data on AI usage – what types of economic tasks are LLMs used for, e.g. Handa et al. (2025), Chatterji et al. (2025).

- Data on AI economic effects – changes in hiring and wages by occupation, e.g. Brynjolfsson, Chandar, and Chen (2025).

Each of these is relatively unopinionated: they try to canvass AI impacts in general.

- Collecting data is hard without theory.


I don’t think we have that many opinionated theories on how each of these should move. Theories are important because we’re expecting things to change rapidly, both due to capability growth and adoption growth. If we don’t have an explicit theory then we’re using an implicit theory.

Think of spending a lot of time & resources collecting samples of COVID, but not, at the same time, working on a theory of how epidemics evolve and who’s more susceptible.

- We don’t have many theories of AI’s impacts.


Here are the prominent theories of the ways in which AI is likely to be adopted:

- Informal observations about LLMs: AI researchers generally say that LLMs are relatively better at tasks that are verifiable, short-horizon, low-context, and text-based.

- Indices of task or occupation “exposure” to AI: Frey and Osborne (2013), Brynjolfsson, Mitchell, and Rock (2018), Felten, Raj, and Seamans (2018), Webb (2019), Eloundou et al. (2023). METR’s time-horizon paper (Kwa et al. (2025)) can also be interpreted as an exposure index for tasks.

- Nathan Lambert seems to feel the same way.

- Nathan Lambert’s post-Curve post says “many AI obsessors are more interested in where the technology is going rather than how or what exactly it is going to be.”

We need more metrics of capabilities

- We don’t have a standard way of defining AI capabilities.


We say “strong AI”, “transformative AI”, “AGI”, or “ASI”.

The best concrete metric is probably METR’s time horizon index. We can then say “what happens when AI can do a one month task?”

The Forecasting Research Institute is working on a set of well-defined capability scenarios.
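The one-month question can be made concrete with a simple doubling-time extrapolation of a time-horizon metric. The sketch below is illustrative only: the current horizon of one hour, the seven-month doubling time, and the 167 working hours per month are placeholder assumptions rather than numbers from this post, and the function name is hypothetical.

```python
import math

# ASSUMPTION: one working month is about 167 hours (40 hours/week).
WORK_HOURS_PER_MONTH = 167.0


def months_until_horizon(current_hours: float,
                         target_hours: float,
                         doubling_months: float) -> float:
    """Calendar months until the task time horizon grows from
    `current_hours` to `target_hours`, if it doubles every
    `doubling_months` months (pure exponential extrapolation)."""
    doublings_needed = math.log2(target_hours / current_hours)
    return doublings_needed * doubling_months


# Placeholder inputs (assumptions, not measurements from the post).
months = months_until_horizon(
    current_hours=1.0,
    target_hours=WORK_HOURS_PER_MONTH,
    doubling_months=7.0,
)
print(f"Months until a one-month task horizon: {months:.1f}")
```

The answer is quite sensitive to the doubling time, which is one reason a shared, well-defined metric is useful: disagreements can be stated as disagreements about one or two parameters.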

- My favorite metric: frontier cost-efficiency growth.


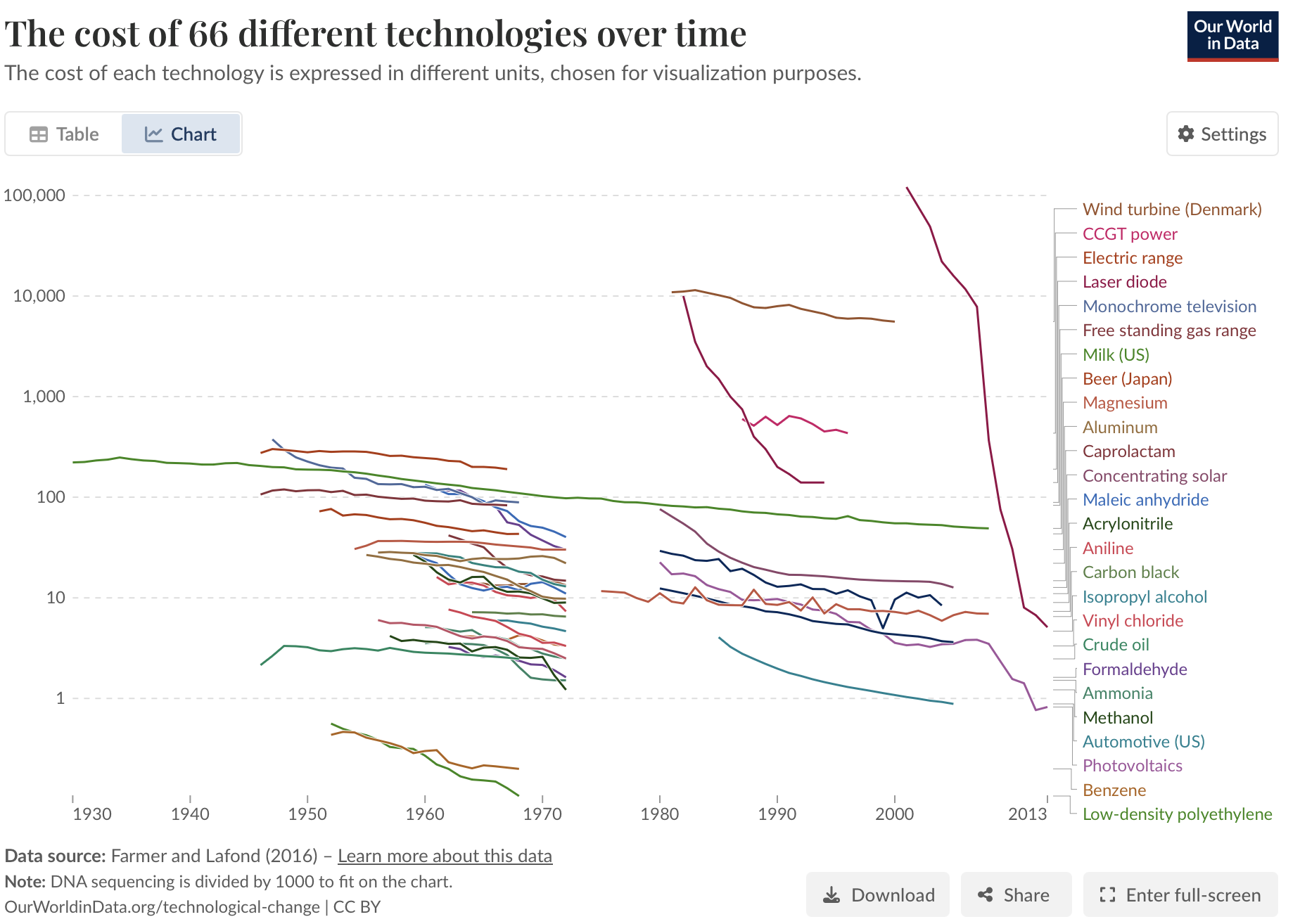

There are hundreds of cost-efficiency metrics that have been regularly increasing over decades: transistor density, corn yield, compression efficiency (see the chart on the right). When AI becomes useful then we expect these metrics to start improving more quickly. Cost-efficiency growth is a useful metric because it’s (1) unambiguous; (2) economically relevant; (3) upstream of other economic impacts like employment.

Existing historical cost-efficiency data:

- Farmer and Lafond (2016) documents progress in 53 technologies (visualized at Our World in Data), but only up to 2013.

- Sherry and Thompson (2021) document historical trends in algorithmic efficiency across a variety of algorithms.
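One way to operationalize this metric is to fit a log-linear trend to a cost-efficiency series and compare the growth rate before and after some candidate break year. The sketch below uses a synthetic series (20%/year, then 40%/year from 2024), not real data, and the function name and break year are assumptions for illustration.

```python
import math


def log_linear_growth(years, values):
    """Annual growth rate from an OLS fit of log(value) on year."""
    logs = [math.log(v) for v in values]
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    slope = sxy / sxx            # change in log(value) per year
    return math.exp(slope) - 1.0  # convert to a percentage growth rate


# SYNTHETIC data: efficiency grows 20%/year up to 2024, then 40%/year.
BREAK_YEAR = 2024
years = list(range(2010, 2031))
values = []
level = 1.0
for year in years:
    values.append(level)
    level *= 1.20 if year < BREAK_YEAR else 1.40

# Split the series at the break year (the break year is in both halves).
break_index = years.index(BREAK_YEAR)
pre_growth = log_linear_growth(years[: break_index + 1], values[: break_index + 1])
post_growth = log_linear_growth(years[break_index:], values[break_index:])

print(f"Growth before {BREAK_YEAR}: {pre_growth:.1%}/year")
print(f"Growth after  {BREAK_YEAR}: {post_growth:.1%}/year")
print(f"Acceleration: {post_growth - pre_growth:+.1%} per year")
```

With real series the fit would be noisy, so the same comparison would need standard errors and a principled choice of break year.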

We need more theory of the offense-defense balance

- Many discussions were about how AI will change the offense-defense balance.


There are dozens of cases where there’s some offense-defense balance, and it’s not immediately clear how AI will affect that balance. Some examples that came up at the Curve:

- hacking

- ransomware

- spear phishing

- media manipulation

- drone assassinations

- drone warfare

In each case it’s clear that AI could help both sides, but arguable how the equilibrium will be affected.

- We should have some common theory.


It seems wasteful to treat each of these problems independently; there ought to be some general principles we can apply on how AI will affect offense-defense balance.

The closest I know is Garfinkel and Dafoe (2019). They argue that when both sides get sufficiently strong, this will generally tend to favor the defender:

“we offer a general formalization of the offense-defense balance in terms of contest success functions. Simple models of ground invasions and cyberattacks that exploit software vulnerabilities suggest that, in both cases, growth in investments will favor offense when investment levels are sufficiently low and favor defense when they are sufficiently high.”
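A toy version of the software-vulnerability case shows how this reversal can arise. The model below is invented here for illustration and is not the specification in Garfinkel and Dafoe (2019): each side finds any given vulnerability with probability 1 - exp(-investment), and the attack succeeds if the attacker finds at least one vulnerability the defender has not found and patched. All function names and parameters are hypothetical.

```python
import math


def discovery_prob(investment: float) -> float:
    """ASSUMED functional form: chance of finding a given vulnerability."""
    return 1.0 - math.exp(-investment)


def attack_success_prob(attack: float, defense: float, n_vulns: int = 1) -> float:
    """Probability the attacker finds at least one unpatched vulnerability."""
    p_attacker = discovery_prob(attack)
    p_defender = discovery_prob(defense)
    # A vulnerability is exploitable if the attacker found it
    # and the defender did not.
    exploitable = p_attacker * (1.0 - p_defender)
    return 1.0 - (1.0 - exploitable) ** n_vulns


# Scale both sides' investment up together and watch the balance shift.
for level in [0.1, 0.3, math.log(2), 1.5, 3.0]:
    success = attack_success_prob(level, level)
    print(f"investment={level:.2f}  attack success={success:.3f}")
```

In this toy setup, with equal investments the attacker's success probability rises until each side finds half of the vulnerabilities and falls thereafter, matching the qualitative pattern in the quote: more investment helps offense at low levels and defense at high levels.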

I also have a note from 2023, which argues that AI will favor the defender for “internal” properties (where human judgment is the ground truth), but favor the attacker for “external” properties (where external reality is the ground truth).

This seems an incredibly fertile area for economic theory but I have seen very little engagement from economists.

References

Footnotes

Tom Davidson has a 2021 report on Explosive Growth, and a model of takeoff speeds, but I don’t think either has a central forecast with multiple aggregate economic variables.

Citation

@online{cunningham2025,
  author = {Cunningham, Tom},
  title = {The {Curve} \& {Economic} {Impacts} of {AI.}},
  date = {2025-10-06},
  url = {tecunningham.github.io/posts/2025-10-06-the-curve.html},
  langid = {en}
}